Given the volume of messages after yesterday’s post (200% more than usual, i.e. 3 rather than 1), and a common theme in them being the divide between what can be done privately in experimental mode and what can be done at scale in a corporate setting, here’s a quick follow-on to develop those threads.

Summary: In the current industrial landscape, two philosophies are colliding: the Silicon Valley “Engineering State” (iterative chaos) and the German “Lawyerly Society” (methodical procedure). The question is whether the law is a brake or a blueprint for AI agents operating on the grid.

This deep-dive was generated via bespoke, tuned instructions in NotebookLM, synthesising a wide range of primary and generated sources on NIS-2, the BNetzA catalogues, and OpenClaw architecture. Subscribers have access to the full podcast and transcript.

Summary

The modern industrial landscape is witnessing a profound collision between two fundamentally different philosophies: the “Engineering State” and the “Lawyerly Society”. The former, epitomised by the chaotic, fast-iterating culture of Silicon Valley startups, prioritises raw speed and “pushing to production”. The latter, exemplified by a German distribution grid operator, is a world of documented processes, categorised risks, and strict legal compliance.

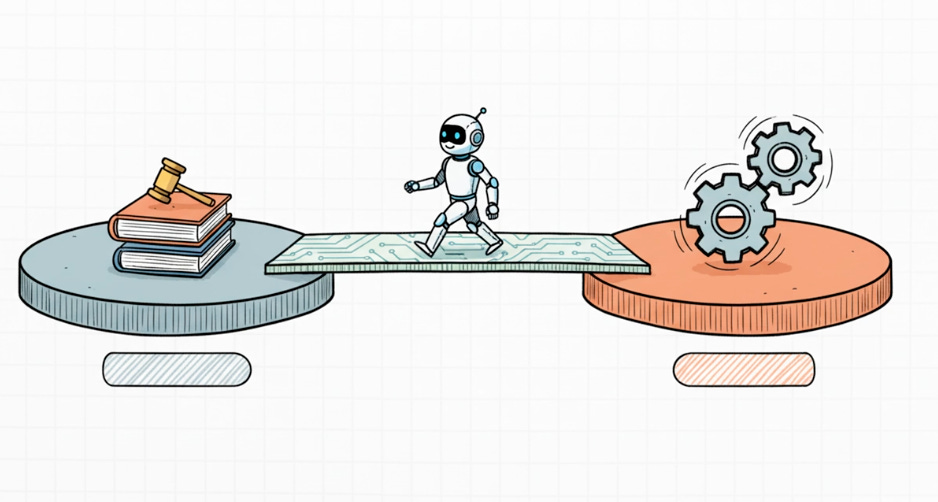

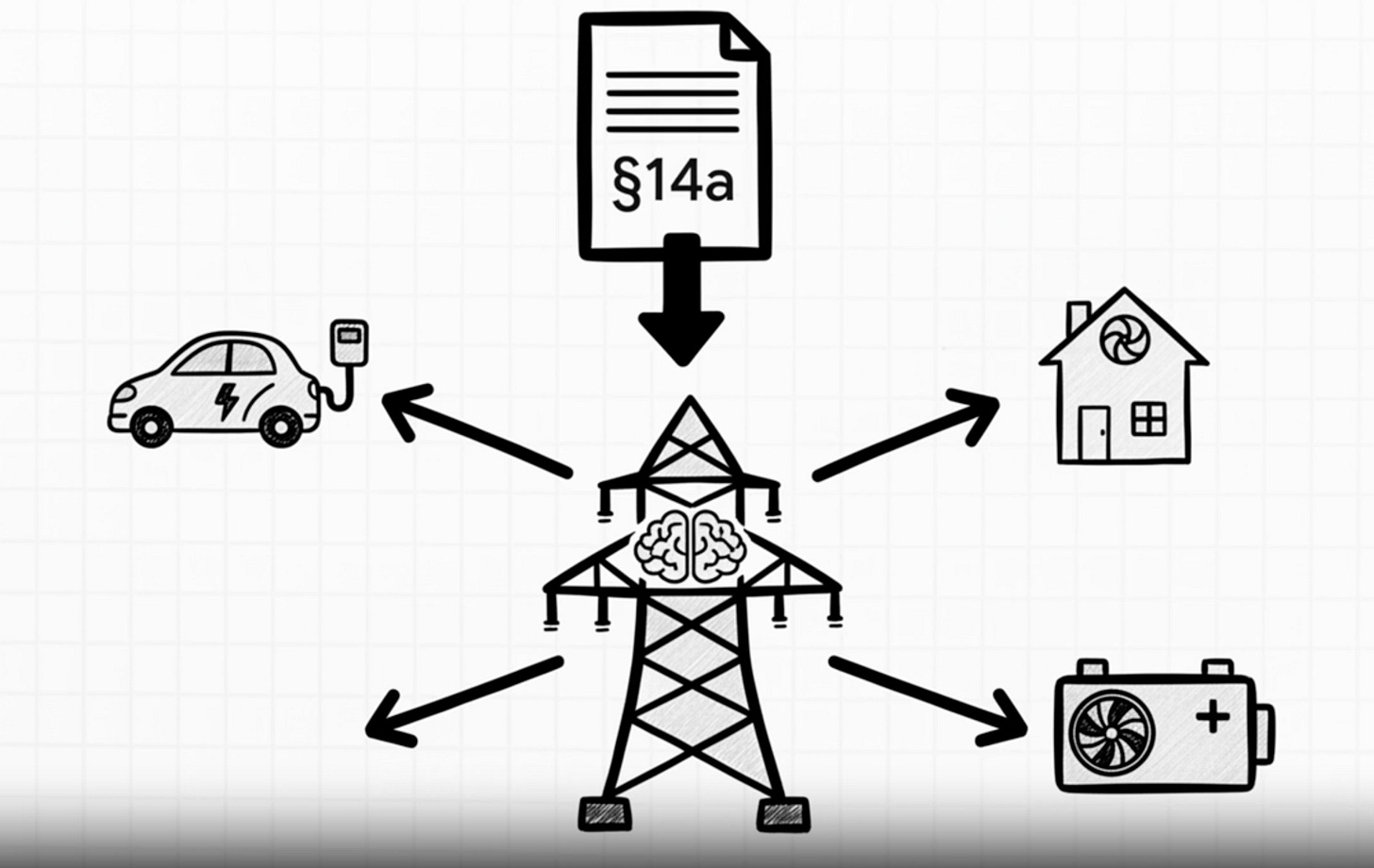

As Germany navigates its energy transition, the central question is whether its dense regulatory frameworks will stifle agentic AI—autonomous entities that execute code rather than just chat—or serve as a unique “master specification” to safely scale these systems to an industrial level.

Point 1: Blueprints vs. Regulatory Fortresses

The debate begins with a fundamental disagreement on the nature of German law.

The Catalyst argues that Germany is not over-regulated but “pre-specified”. For decades, Germany has invested in detailed rules and codes that can be treated as markdown (.md) files, the format commonly used for the foundational instructions given to AI agents. In this view, the law is the ultimate system prompt, setting precise, explicit boundaries for what an agent may do.
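To make the “law as system prompt” idea concrete, here is a minimal Python sketch, assuming rules are kept as structured data from which both the markdown instructions and a numeric check are generated. The `Rule` type, the rule IDs and the 30% curtailment limit are hypothetical illustrations, not taken from any real catalogue.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class Rule:
    rule_id: str                       # hypothetical identifier, not a real catalogue reference
    text: str                          # human-readable obligation, rendered into the prompt
    field: Optional[str] = None        # action field a numeric limit applies to, if any
    max_value: Optional[float] = None  # upper bound for that field, if any

# Illustrative rules only; real obligations would come from the applicable codes.
RULES = [
    Rule("R-001", "Record operator, timestamp and reason for every switching action."),
    Rule("R-002", "Never curtail a single feeder by more than 30% in one step.",
         field="curtail_pct", max_value=30.0),
]

def render_system_prompt(rules: list[Rule]) -> str:
    """Render the rule set as a markdown system prompt for an agent."""
    lines = ["# Operating rules", ""]
    lines += [f"- **{r.rule_id}**: {r.text}" for r in rules]
    return "\n".join(lines)

def violations(action: dict, rules: list[Rule]) -> list[str]:
    """Check a proposed action against the numeric limits in the same rule set."""
    found = []
    for r in rules:
        if r.field is None or r.max_value is None:
            continue  # prose-only rule: guides the prompt, not checked here
        value = action.get(r.field)
        if value is not None and value > r.max_value:
            found.append(r.rule_id)
    return found

print(render_system_prompt(RULES))
print(violations({"curtail_pct": 45.0}, RULES))  # ['R-002']
```

Keeping the prose and the limit in one record means the agent’s instructions and the check applied to its output cannot drift apart.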

The Critic counters that this creates a “regulatory fortress” that fundamentally paralyses the fluid nature of agentic AI. They contend that laws are static by design, whereas the philosophy of agentic AI is “software building software”, creating a structural mismatch between agentic systems and critical infrastructure law.

Point 2: The Autonomy Deadlock

A key point of contention is how to audit a system that is designed to change itself.

The Critic points out that from August 2026, the EU AI Act’s obligations for “high-risk” systems apply, and AI used as a safety component in energy supply falls into that category. Those obligations require a conformity assessment, in effect a static declaration of trustworthiness: an auditor signs off on a snapshot of compiled code. However, you cannot mathematically certify a system that opens a terminal and rewrites its own code base on a Tuesday afternoon to solve a novel grid congestion issue. The code hash changes, and the certification is instantly void.
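The Critic’s point about hashes can be shown in a few lines. This sketch assumes certification is pinned to a SHA-256 digest of the approved source; the `dispatch` function and the `is_certified` helper are hypothetical.

```python
import hashlib

def code_hash(source: str) -> str:
    """SHA-256 digest of the source text, as a certificate might pin it."""
    return hashlib.sha256(source.encode("utf-8")).hexdigest()

# Hypothetical certified snapshot of an agent's dispatch routine.
certified_source = "def dispatch(load):\n    return min(load, 100)\n"
CERTIFIED_HASH = code_hash(certified_source)

def is_certified(source: str) -> bool:
    return code_hash(source) == CERTIFIED_HASH

# The agent "improves" its own code by raising one constant.
rewritten = certified_source.replace("100", "120")

print(is_certified(certified_source))  # True
print(is_certified(rewritten))         # False
```

Any edit, even a single changed constant, produces a different digest, which is why a self-modifying agent cannot keep a snapshot-based certificate.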

The Catalyst proposes the “Admission Gate” (DefenseClaw) as the engineering solution. This governance layer sits between the AI agent and the physical infrastructure. Before any agent-generated code runs, it is automatically scanned in real time against strict parameters defined by the BSI (Federal Office for Information Security).
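A minimal sketch of what an admission gate might check, assuming agent output arrives as Python source. It uses the standard `ast` module to reject code that imports or calls anything on a denylist. The denylists stand in for operator-defined policy; they are not BSI parameters, and nothing here reflects DefenseClaw’s actual interface.

```python
import ast

# Hypothetical policy standing in for operator-defined parameters.
FORBIDDEN_MODULES = {"os", "subprocess", "socket", "ctypes"}
FORBIDDEN_CALLS = {"eval", "exec", "compile", "__import__"}

def admission_check(source: str) -> list[str]:
    """Return policy findings; an empty list means the code may be admitted."""
    try:
        tree = ast.parse(source)
    except SyntaxError as exc:
        return [f"syntax error: {exc.msg}"]
    findings = []
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            for alias in node.names:
                if alias.name.split(".")[0] in FORBIDDEN_MODULES:
                    findings.append(f"forbidden import: {alias.name}")
        elif isinstance(node, ast.ImportFrom):
            if node.module and node.module.split(".")[0] in FORBIDDEN_MODULES:
                findings.append(f"forbidden import: {node.module}")
        elif isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
            if node.func.id in FORBIDDEN_CALLS:
                findings.append(f"forbidden call: {node.func.id}")
    return findings

agent_code = "import subprocess\nsubprocess.run(['reboot'])\n"
print(admission_check(agent_code))         # ['forbidden import: subprocess']
print(admission_check("x = max(1, 2)\n"))  # []
```

A static denylist is easy to evade (for example via `getattr` tricks), so in practice a scan like this would be one layer in front of sandboxed execution, not a substitute for it.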

Point 3: Decoupling Risk via the “Starbucks Pattern”

How can autonomous agents safely manage critical infrastructure without risking a regional blackout?

The Catalyst suggests using the “Starbucks Pattern” of asynchronous messaging (the name comes from Gregor Hohpe’s essay on why Starbucks does not use two-phase commit). Just as a cashier taking an order is decoupled from the barista making the coffee, an AI’s optimisation request is decoupled from the grid’s physical response. If an AI hallucinates or fails, the system issues a “compensating action” or falls back to a safe baseline, so the error never propagates into physical grid operation.
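The decoupling can be sketched with `asyncio`, assuming the agent only enqueues setpoint requests and a separate worker owns the grid interface. The megawatt limits, the baseline value and the `Request` type are hypothetical; the out-of-range branch shows the fall-back-to-baseline path rather than a full compensation workflow.

```python
import asyncio
from dataclasses import dataclass

SAFE_BASELINE_MW = 50.0   # hypothetical safe setpoint
LIMITS_MW = (0.0, 100.0)  # hypothetical physical operating envelope

@dataclass
class Request:
    request_id: int
    setpoint_mw: float

async def grid_worker(queue: asyncio.Queue, applied: list) -> None:
    """The 'barista': consumes requests independently of the agent that produced them."""
    low, high = LIMITS_MW
    while True:
        req = await queue.get()
        try:
            if low <= req.setpoint_mw <= high:
                applied.append((req.request_id, req.setpoint_mw))
            else:
                # Fallback: reject the out-of-range request and apply the safe baseline.
                applied.append((req.request_id, SAFE_BASELINE_MW))
        finally:
            queue.task_done()

async def main() -> list:
    queue: asyncio.Queue = asyncio.Queue()
    applied: list = []
    worker = asyncio.create_task(grid_worker(queue, applied))
    # The 'cashier' (the agent) enqueues requests and moves on without waiting.
    for i, mw in enumerate([60.0, 9999.0, 40.0]):
        queue.put_nowait(Request(i, mw))
    await queue.join()  # demo only: wait until the worker has handled everything
    worker.cancel()
    return applied

print(asyncio.run(main()))  # [(0, 60.0), (1, 50.0), (2, 40.0)]
```

The agent never waits on, or directly touches, the physical side; a hallucinated 9,999 MW request is absorbed by the worker and replaced by the baseline.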

The Critic warns that the stakes for critical infrastructure are “legally existential” compared to a coffee shop. Under the NIS-2 Implementation Act (in force since December 2025), management can be held personally liable and companies face heavy fines for failures in cyber-risk management, fostering a “culture of no” in which any technical failure could mean personal ruin.

Point 4: Sovereignty and the Works Council

The debate concludes with two German realities: labour relations and geopolitics.

The Critic notes that Section 87(1) No. 6 of the Works Constitution Act (BetrVG) gives Works Councils mandatory co-determination rights over technical systems capable of monitoring employees’ behaviour or performance. Because cybersecurity laws require strict logging of who prompted what and when, any AI deployment effectively becomes such a monitoring system, leading to gruelling negotiations.
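One way engineers might soften the monitoring concern, sketched here as an assumption rather than anything the source prescribes, is to keep the “who prompted what and when” record but pseudonymise the “who” with a keyed HMAC. The key name and record fields are hypothetical; the idea is that linking a token back to a named employee requires the key, whose use could then be governed by a works agreement.

```python
import hashlib
import hmac
import json
from datetime import datetime, timezone

# Hypothetical key; in this sketch a separate custodian holds it, so linking a
# token to a person means recomputing tokens for known IDs under an agreed procedure.
PSEUDONYM_KEY = b"replace-with-key-from-a-secure-store"

def pseudonymise(user_id: str) -> str:
    """Stable keyed pseudonym: the same user always maps to the same token."""
    digest = hmac.new(PSEUDONYM_KEY, user_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]

def audit_record(user_id: str, prompt: str, action: str) -> str:
    """Build one JSON log line recording who prompted what, and when."""
    record = {
        "who": pseudonymise(user_id),
        "when": datetime.now(timezone.utc).isoformat(),
        "prompt": prompt,
        "action": action,
    }
    return json.dumps(record, sort_keys=True)

print(audit_record("m.mueller", "Rebalance feeder F3", "setpoint_change"))
```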

The Catalyst argues these hurdles force superior engineering. For example, to comply with the GDPR principle of data minimisation, engineers use Retrieval-Augmented Generation (RAG) to separate the AI’s reasoning engine from sensitive data: the data stays in controlled stores, and only the passages needed for a given query are retrieved and passed to the model. Furthermore, NIS-2 forces utilities to build sovereign, hardened AI infrastructure on their own bare-metal servers rather than relying on fragile, foreign cloud APIs.
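The RAG separation can be sketched as follows, assuming a toy corpus in which technical text sits alongside personal fields. Word overlap stands in for the embedding search a real RAG pipeline would use, and all field names and records are invented. The minimisation comes from the allowlist applied at retrieval time: personal fields never reach the prompt that would be sent to the model.

```python
import re

# Toy corpus: each record mixes technical text with personal fields (all invented).
DOCUMENTS = [
    {"id": "d1", "text": "Transformer T7 overheats above 85 C under sustained load.",
     "customer_name": "A. Example", "meter_id": "DE-0001"},
    {"id": "d2", "text": "Feeder F3 voltage drops during the evening peak load.",
     "customer_name": "B. Example", "meter_id": "DE-0002"},
    {"id": "d3", "text": "Annual maintenance schedule for substation S1.",
     "customer_name": "C. Example", "meter_id": "DE-0003"},
]

# Data minimisation: only these fields may ever reach the model.
ALLOWED_FIELDS = {"id", "text"}

def tokens(s: str) -> set[str]:
    return set(re.findall(r"\w+", s.lower()))

def minimise(doc: dict) -> dict:
    return {k: v for k, v in doc.items() if k in ALLOWED_FIELDS}

def retrieve(query: str, docs: list[dict], k: int = 2) -> list[dict]:
    """Rank by word overlap (a stand-in for embedding search) and strip personal fields."""
    q = tokens(query)
    ranked = sorted(docs, key=lambda d: len(q & tokens(d["text"])), reverse=True)
    return [minimise(d) for d in ranked[:k] if q & tokens(d["text"])]

def build_prompt(query: str, docs: list[dict]) -> str:
    context = "\n".join(f"[{d['id']}] {d['text']}" for d in docs)
    return f"Context:\n{context}\n\nQuestion: {query}"

query = "Why does transformer T7 overheat under load?"
print(build_prompt(query, retrieve(query, DOCUMENTS)))
```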

The Final Verdict: The Synthesis

The ultimate test for Germany is whether the Lawyerly Society can adapt to the machine speed of the Engineering State without breaking its own rules. If the law is treated as a master specification, Germany may uniquely fuse industrial dynamism with its rich tradition of legal and human safeguards. If not, the technology gap between agile “synthetic engineering teams” and rigid legacy infrastructure may become insurmountable.